Using EFS as shared home for BitBucket Data Center on Kubernetes

Hey all.

This is not really a question, as I already know what the official answer would be if it were one:

Q. Can we use AWS EFS as backing for the shared home in a BitBucket Data Center installation?

A. No, it's not performant enough.

I understand that EFS is not considered performant enough for workloads such as git. This is due to the nature of git, in that it typically makes many small writes/reads and that EFS has increased per-operation latency due to its distributed nature. It's well documented that EFS is a little lacking in this area compared to more conventional NFS shares. This is despite the continuous improvements AWS make to the service.

I imagine that in larger installs, this may well become a serious problem.

However, I would like to discuss the feasibility of using EFS for smaller BitBucket instances, particularly on EKS, where it seems a natural fit as long as it is sufficient for your particular scenario.

I have just finished a batch of performance tests on our proof of concept instance of BitBucket on EKS, imitating heavy use scenarios that we would not typically see in our production environment.

My finding was that, for our use case and instance size, EFS is easily performant enough for our requirements.

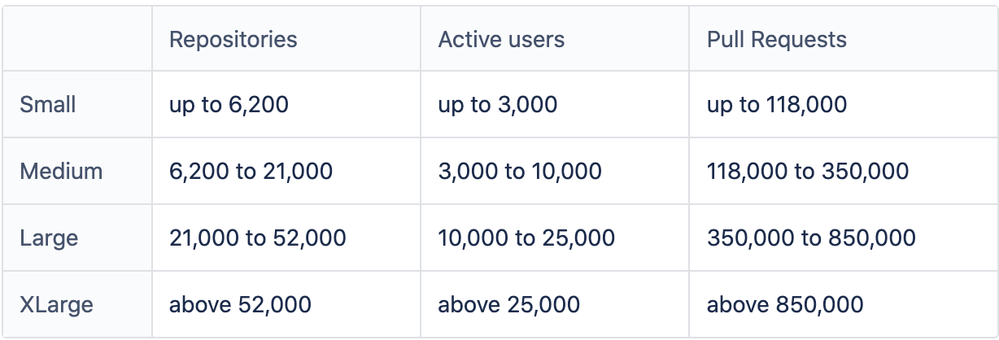

To put a little more context into what I mean by a small instance size, when I look at the BitBucket instance size here (https://confluence.atlassian.com/enterprise/bitbucket-data-center-load-profiles-962360410.html) ours comes out at about 1/10 the size of a 'Small' in all the below metrics.

To measure the performance, I simulated multiple concurrent git clones of our largest repos and was easily able to achieve higher overall sustained throughput with EFS than we ever see on our production instance. This was on repos with many small objects as well as repos with larger objects.
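For anyone wanting to repeat this kind of test, here is a minimal sketch of a concurrent-clone benchmark in Python. It is not the script used for the results above. The function names, the repository URL and the worker count are all placeholders, and it only measures what a client sees end to end, using the size of each clone on disk as a rough proxy for data transferred.

```python
import subprocess
import tempfile
import time
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def dir_size_bytes(path):
    """Total size of all regular files under path (including .git)."""
    return sum(p.stat().st_size for p in Path(path).rglob("*") if p.is_file())


def clone_once(repo_url, workdir):
    """Clone repo_url into a fresh directory under workdir; return bytes on disk."""
    target = tempfile.mkdtemp(dir=workdir)
    subprocess.run(["git", "clone", "--quiet", repo_url, target], check=True)
    return dir_size_bytes(target)


def run_concurrent(repo_url, workers, workdir, clone=clone_once):
    """Run `workers` clones in parallel and report aggregate throughput.

    `clone` is injectable so the harness can be dry-run without a server.
    """
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        sizes = list(pool.map(lambda _: clone(repo_url, workdir), range(workers)))
    elapsed = time.perf_counter() - start
    total = sum(sizes)
    return {
        "clones": workers,
        "bytes": total,
        "seconds": elapsed,
        "mib_per_s": total / elapsed / 2**20,
    }
```

Something like `run_concurrent("https://bitbucket.example.com/scm/proj/big-repo.git", 8, "/tmp/clone-bench")` would run eight clones in parallel (the URL is hypothetical and the working directory must already exist). Comparing the reported `mib_per_s` against what production normally sees is the comparison described above.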

So I guess I do have a question.

Is the reason for not supporting EFS purely based on performance? This would make sense for Xlarge, Large, Medium and maybe even Small instances. But on something 1/10 the size of that (Could we call it XSmall? Micro?), would it be acceptable to use?

Are there other non-performance based reasons to not use EFS for the shared home on BitBucket? Could it break anything other than performance?

The support issue.

If we were to go ahead and use EFS for this, we do understand that it's not officially supported. If we had any performance issues, we could not raise a ticket for them.

On the flip side, in the worst case it would not be terribly difficult to migrate the data stores off EFS and onto a more performant disk such as EBS. We could do this if we really needed support (of course, I am talking about after the Helm Charts are officially supported).

Any thoughts on this subject? We would like to proceed in this direction. It would seem inefficient for us to provision some extra clustered NFS service for no tangible benefit, when EFS is sitting there waiting for us :)

Thanks for reading my essay!

Tom...


Hi Yevhen, thanks for your response.

So it seems it is primarily a performance thing then. I am surprised about the replication lag you were seeing, given that EFS is meant to have close-to-open consistency. I would expect that, so long as the file has been closed (or was written synchronously, for example opened with O_DIRECT or followed by an fsync), it would be consistent. According to their claims at least.

Regarding my comments about moving to EBS, there was a huge part I left out there. In that scenario, yes, we would only be able to run a single BBDC node. This may seem to make no sense, but there are scenarios where this is acceptable. Consider the following:

- With an instance the size of ours (XS, Micro, 1/10 scale, whatever you want to call it), one node is more than enough to handle our foreseeable throughput requirements.

- Our RTO/RPO requirements are easily satisfied with a single 'self-healing' instance on K8s.

- Our existing environment is only a single node with no performance impact.

- BB Server licensing is going away. BBDC is our only on-premises option.

Even though clustering is a major feature of BBDC, it's not the only reason to go that direction. Aside from the fact that it is the only on-premises licensing model now, there are other features that we would benefit from such as LDAP integration and SSO.

Regarding the replication lag you mentioned, if it becomes a problem, we could sacrifice the fault tolerance provided by running in a cluster and run BBDC with EFS in a single node configuration. Then I hear you ask: "If you run with a single node, why even use EFS at all?".

I'm glad you asked! In this scenario, we would still benefit from HA in the event of an AZ outage as we could bring up the pod in any remaining AZ.

With EBS, or even with a dedicated EBS-backed NFS server (in a single zone, as per the AWS Quickstart Guide), AZ failures would not be tolerated.

It seems there is actually no supported Multi-AZ solution for BBDC. I imagine any Multi-AZ NFS solution would run into problems similar to those faced with EFS?

I think that I now understand the caveats of choosing this solution.

- No support on EFS-related issues

- Potentially unable to run with multiple BBDC nodes

- Potentially have to migrate to a single node backed by EBS

- Potentially need to provision some other NFS solution if our throughput requirements increase in the future

Thanks,

Tom...

Hi @Tom Francis ,

I'd like to add a few more things to the issue of using EFS with Bitbucket Server.

Firstly, the added latency that EFS has applies to every interaction with a Git repository. The number of users or the number of repositories don't necessarily matter that much, but running Bitbucket on EFS will make the whole installation feel sluggish.

For example, Git reads hundreds, or potentially thousands, of files to list all branches in a repository. If each of those file operations has a latency of several milliseconds, just clicking a "branch selector" drop down might take several seconds. This is something that regular performance tests might miss, but makes the experience of using Bitbucket worse.
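To make that arithmetic concrete, here is a rough sketch of a small-file latency probe that could be pointed at a directory on the candidate file system. It is an illustration, not an Atlassian tool: the function names and defaults are made up for this example, and because the files are read back by the same client that just wrote them, client-side caching may hide some of the latency, so the result is best treated as a lower bound.

```python
import os
import tempfile
import time


def probe_small_file_latency(directory, count=500, size=41):
    """Create `count` tiny files in `directory`, then time open+read+close on each.

    Returns the mean per-file latency in milliseconds. The default size of
    41 bytes mimics a loose Git ref (40 hex characters plus a newline).
    """
    payload = b"0" * (size - 1) + b"\n"
    paths = []
    for i in range(count):
        path = os.path.join(directory, f"ref-{i:05d}")
        with open(path, "wb") as fh:
            fh.write(payload)
        paths.append(path)

    # Timed section: one open/read/close per file, strictly serial.
    start = time.perf_counter()
    for path in paths:
        with open(path, "rb") as fh:
            fh.read()
    elapsed = time.perf_counter() - start

    # Clean up the probe files so the directory is left as it was found.
    for path in paths:
        os.remove(path)
    return elapsed / count * 1000.0


def estimated_listing_seconds(file_count, latency_ms):
    """Serial cost of touching `file_count` files at `latency_ms` each."""
    return file_count * latency_ms / 1000.0
```

As a worked example, `estimated_listing_seconds(1000, 3)` gives 3.0: a thousand file reads at 3 ms each already add up to three seconds for a single page interaction, regardless of how good the aggregate throughput looks.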

Additionally, EFS has something called "burst credits", which might help with performance for a while, but eventually those credits will be used up and Bitbucket will slow down even more. See https://aws.amazon.com/premiumsupport/knowledge-center/efs-burst-credits/ for more information on that.
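As a back-of-the-envelope illustration of why a short test can look fine while a long-running instance slows down, the sketch below models burst credits as a simple token bucket. This is a deliberate simplification, not the exact AWS accounting, and the numbers in the example are placeholders rather than EFS figures. The real balance is reported by the `BurstCreditBalance` CloudWatch metric, and the actual accrual rules are in the AWS documentation.

```python
def seconds_until_credits_exhausted(credit_bytes, baseline_bps, usage_bps):
    """Time until a burst-credit balance runs out under constant load.

    Simplified token-bucket model: credits accrue at baseline_bps and are
    spent at usage_bps (both in bytes per second). Returns None when usage
    does not exceed the baseline, because the balance never drains then.
    """
    drain = usage_bps - baseline_bps
    if drain <= 0:
        return None
    return credit_bytes / drain


# Placeholder inputs, NOT real EFS limits: 100 GiB of credits,
# a 1 MiB/s baseline and a sustained 11 MiB/s workload.
example = seconds_until_credits_exhausted(100 * 2**30, 1 * 2**20, 11 * 2**20)
```

With those made-up inputs the balance lasts 10240 seconds, a little under three hours, after which throughput would fall back to the baseline. A performance test shorter than that window would never see the slowdown.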

Secondly, if your concern is recovery after an AZ failure, our currently supported solution is documented here: https://confluence.atlassian.com/bitbucketserver/disaster-recovery-guide-for-bitbucket-data-center-833940586.html. Please check if this can satisfy your RTO/RPO requirements.

And thirdly, I want to add to the statement "No support on EFS related issues". Due to the many unknowns using EFS introduces into a system, such as data integrity, odd behavior of locking or unlocking, as well as intermittent errors during file operations, we'll most likely ask any customer currently running on EFS to migrate to a supported solution before we can help out with a support ticket. This applies to any support ticket, whether it appears to be EFS related or not.

Lastly, I want to attempt to provide a potential solution that satisfies your requirements. If you use EBS, you could make use of EBS snapshots for our documented disaster recovery. EBS snapshots are atomic, so they satisfy the requirement of using a replication strategy that guarantees consistency. You can control EBS snapshots from within Kubernetes, if you install the EBS CSI driver: https://aws.amazon.com/blogs/containers/using-ebs-snapshots-for-persistent-storage-with-your-eks-cluster/. Unfortunately we do not have an example for this, but keep an eye on our data-center-helm-charts repository on GitHub in case we add it in the future.
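Until an official example exists, the following is an unofficial sketch of what requesting such a snapshot from within Kubernetes might look like. It builds a `VolumeSnapshot` object (API group `snapshot.storage.k8s.io/v1`) as JSON, which `kubectl apply -f -` accepts. It assumes the snapshot CRDs and snapshot controller are installed alongside the EBS CSI driver, and that a `VolumeSnapshotClass` using the `ebs.csi.aws.com` driver already exists. The snapshot, namespace, PVC and class names below are all hypothetical.

```python
import json


def volume_snapshot_manifest(name, namespace, pvc_name, snapshot_class):
    """Build a snapshot.storage.k8s.io/v1 VolumeSnapshot for an existing PVC."""
    return {
        "apiVersion": "snapshot.storage.k8s.io/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": pvc_name},
        },
    }


# All four names are placeholders; substitute the ones from your own cluster.
manifest = volume_snapshot_manifest(
    name="bitbucket-home-snap",
    namespace="bitbucket",
    pvc_name="bitbucket-shared-home",
    snapshot_class="ebs-snapclass",
)
print(json.dumps(manifest, indent=2))
```

Saving this as a script (say `make_snapshot.py`, also a made-up name) and running `python make_snapshot.py | kubectl apply -f -` would submit the request. Scheduling it, for instance from a CronJob, is one possible way to fit it into the documented disaster recovery procedure.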

https://github.com/atlassian-labs/data-center-helm-charts

Hope this helps.

Cheers,

Wolfgang
